The enterprise org chart is getting a second page.

OpenAI's new platform frames AI agents as employees, raising a question leadership teams will face: what happens when headcount is measured in agents, not people?

The enterprise is learning to think of AI agents as something new: employees. OpenAI launched Frontier this week, a platform designed to build, deploy, and manage AI agents using the apparatus of human resources management. Agents get identities. Agents get onboarding. Agents get permissions. Agents get boundaries. The language is deliberate, and it's spreading. What was once a "tool" is now a "coworker." And that change in framing, small as it sounds, reshapes the organizational design of the enterprises adopting it.

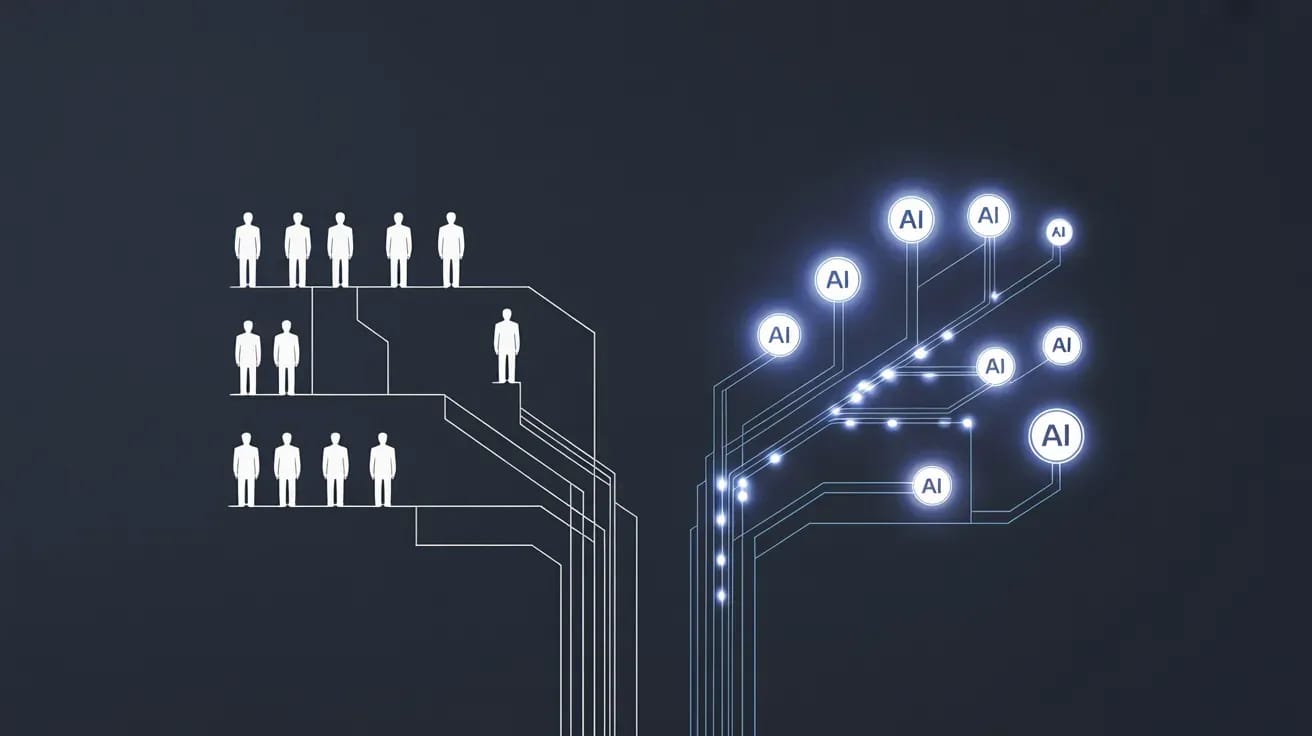

We are entering a moment where the traditional org chart meets the agent org chart, and where Chief People Officers are joined by Chief Agent Officers in the C-suite.

The enterprises building at scale are learning that managing fifty AI agents requires the same governance, compliance, and oversight as managing fifty humans. The form changes. The function does not.

From Tools to Coworkers: The Language That Reshapes Everything

The shift in language marks a shift in thinking. For years, enterprise software framed AI as augmentation: tools that humans wielded. Now the framing is different. According to PYMNTS, OpenAI now sells Frontier not just as a platform but as a way to onboard "AI coworkers" with shared context, hands-on learning, and boundaries. The Verge noted that the platform sounds like "HR for AI," an observation that lands because the comparison is exact.

This language matters because language shapes hiring. If agents are employees, enterprises will ask questions they've never asked before. What is the headcount? What is the cost per agent per year? What is the retention rate? What is the compliance footprint?

The direction is already clear: agent headcount will grow faster than human headcount at major enterprises. Not because humans are being replaced wholesale, but because the economics of agents are different. An agent carries an upfront cost to build and deploy, then a comparatively small cost per transaction. A human costs money every month, every year, for as long as they are employed. At scale, the math favors agents. And when an enterprise has more agents than people, the management challenge flips. The agent workforce becomes the primary workforce. The human workforce becomes the oversight layer.
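To make the cost argument concrete, here is a minimal sketch of a break-even calculation. The cost model (a one-time setup cost plus a flat per-transaction cost for the agent, a flat monthly cost for the human) and every number in it are illustrative assumptions, not figures from OpenAI or any of the cited reports.

```python
def break_even_month(setup_cost, cost_per_transaction, transactions_per_month,
                     human_monthly_cost, horizon_months=120):
    """Return the first month in which cumulative agent cost is strictly lower
    than cumulative human cost, or None if that never happens within the horizon.

    All parameters are hypothetical inputs for illustration only.
    """
    for month in range(1, horizon_months + 1):
        agent_total = setup_cost + cost_per_transaction * transactions_per_month * month
        human_total = human_monthly_cost * month
        if agent_total < human_total:
            return month
    return None


# Placeholder numbers, chosen only to exercise the function.
print(break_even_month(
    setup_cost=120_000,
    cost_per_transaction=0.05,
    transactions_per_month=20_000,
    human_monthly_cost=8_000,
))
```

With these placeholder inputs the agent becomes the cheaper option in month 18. If the agent's monthly running cost is at or above the human's, the function returns None, which is the case the "math favors agents" claim quietly assumes away.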

The Agent Operations Manager Is Already Hiring

With scale comes specialization. Enterprise IT once had one org chart. Soon it will have two.

The first visible new role is the Agent Operations Manager. This person does for AI agents what operations managers do for humans: ensures they're deployed correctly, performing within SLA, and covered by load balancing and failure recovery. According to THE DECODER, OpenAI Frontier launches with selected enterprise customers who are already thinking through these operational questions. Who owns the agent when it fails? Who monitors its performance? Who upgrades it? These are questions that didn't exist last year.

The second role is the Agent Compliance Officer. As agents gain decision-making autonomy, compliance expands. If an agent is making decisions about customer data, credit determinations, or transaction routing, then someone needs to audit the agent, log its decisions, and ensure it isn't drifting into territory it shouldn't occupy. Banks already do this for humans. They will do it for agents. The difference is that agent audit trails can be cleaner. A properly instrumented agent logs every step it takes.

The third role is Head of Agent Strategy. This person thinks about agent deployment not quarter to quarter, but in terms of capacity planning over years. When do we add another 100 agents? In which functions? Against what ROI hurdle? It's a new kind of workforce planning, and it requires new thinking.

Permissions, Governance, and the Question of Agent Autonomy

What makes Frontier distinctive is not that it manages agents, but how it manages them: with the permissions model that enterprises built for humans. An agent has a role. That role has permissions. The agent cannot exceed them.

This sounds simple. It is not. In human organizations, permissions exist because humans sometimes exceed them. We have compliance because the incentives for humans are often misaligned with the organization's. An agent has no competing incentives of its own. In principle, it follows its instructions exactly.

And yet, enterprises are building permission structures for agents anyway. Why? Because enterprises have learned from past AI deployments that unforeseen behavior emerges. An agent trained to maximize customer satisfaction might overcomply with every customer request, obliterating margins. An agent trained to route to the cheapest vendor might route to one that's approaching insolvency. Governance is not about preventing malice. It's about preventing drift.
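The role-and-permission model described in this section can be sketched in a few lines. This is an illustrative assumption about how such a check might look, not Frontier's actual API. The class names, the permission strings, and the audit log format are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Role:
    """A named role and the set of actions it grants (hypothetical model)."""
    name: str
    permissions: frozenset


@dataclass
class Agent:
    """An agent bound to one role; every attempted action is logged."""
    agent_id: str
    role: Role
    audit_log: list = field(default_factory=list)

    def attempt(self, action: str) -> bool:
        # Allow the action only if the role grants it, and record the
        # outcome either way so denied attempts are visible to auditors.
        allowed = action in self.role.permissions
        self.audit_log.append((action, allowed))
        return allowed


# Hypothetical role and permission names, for illustration only.
contract_reviewer = Role("contract_reviewer", frozenset({"read_contract", "flag_clause"}))
agent = Agent("agent-001", contract_reviewer)

agent.attempt("read_contract")    # granted by the role
agent.attempt("approve_payment")  # not granted, so denied and logged
```

Logging denied attempts as well as granted ones is the design choice that matters here: a pattern of denials is one of the few early signals of the drift the section describes.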

The Org Chart That Includes No Humans

The stranger question arrives next: where do agents sit in the org chart? Do they report to a human manager? Do they report to other agents?

According to TechCrunch, OpenAI is treating agents like human employees, but the implications are darker than that sentence sounds. In human hierarchies, you have a manager who evaluates you, whose judgment you trust, who decides your pay and your future. What is the equivalent for an agent? Is it the Agent Operations Manager who monitors its SLA? Is it the compliance system that audits its decisions? Is it the human whose function the agent automates?

Some enterprises will create pure-agent sub-teams. These are agents managed by other agents. A senior agent manages a team of junior agents doing contract review. The senior agent monitors the junior agents, flags those performing below threshold, and escalates edge cases to a human. This structure already exists in limited form at cloud providers managing their own infrastructure, but it's about to scale.

Others will integrate agents into mixed teams: three agents, two humans, all reporting to one human manager. The manager monitors both the humans and the agents. The humans and agents coordinate. The humans handle escalations.

The org chart of the enterprise will soon look like neither of these. It will look like both. It will be a hybrid system where some agents report to humans, some humans report to agents, some agents report to other agents, and the entire structure is designed to optimize for cost and performance in ways that pure-human hierarchies cannot.
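The pure-agent sub-team described earlier in this section, where a senior agent flags underperforming junior agents and escalates edge cases to a human, reduces to a simple triage step. The sketch below is a hypothetical illustration: the `ReviewResult` fields, the accuracy metric, and the 0.9 threshold are assumptions, not details from any vendor.

```python
from dataclasses import dataclass


@dataclass
class ReviewResult:
    """One junior agent's reported outcome (hypothetical shape)."""
    agent_id: str
    accuracy: float   # fraction of reviews judged correct, 0.0 to 1.0
    edge_case: bool   # True if the agent hit a case it could not resolve


def triage(results, min_accuracy=0.9):
    """Return (flagged, escalated): agent ids below the accuracy threshold,
    and agent ids whose work needs a human decision."""
    flagged = [r.agent_id for r in results if r.accuracy < min_accuracy]
    escalated = [r.agent_id for r in results if r.edge_case]
    return flagged, escalated


# Illustrative data only.
results = [
    ReviewResult("junior-1", accuracy=0.97, edge_case=False),
    ReviewResult("junior-2", accuracy=0.82, edge_case=False),
    ReviewResult("junior-3", accuracy=0.95, edge_case=True),
]
flagged, escalated = triage(results)
```

Here `flagged` contains `junior-2` and `escalated` contains `junior-3`. Keeping the two lists separate mirrors the org-chart question in the text: performance problems go to whoever owns the agent, while edge cases go to a human with authority over the decision.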

The Parallel at GitHub: Agent HQ

OpenAI is not the only place this pattern is showing up. The Verge reported that GitHub is making Claude and other AI coding agents directly available to developers as part of a broader "Agent HQ" vision. The developer tools that once integrated AI as a feature are now building platforms to manage agents as first-class abstractions. It's the same pattern: from tools to coworkers, from feature to platform, from tactical to strategic.

This convergence is not a coincidence. Enterprises adopting agentic workflows keep arriving at the same insight: agents need to be managed, not just called. They need governance. They need identity. They need structure.

The Enterprise of 2027

Two years from now, the typical Fortune 500 company will have two org charts. One charts humans: Accounting, Legal, Sales, Operations. The other charts agents: Contract Review Agents, Compliance Audit Agents, Lead Generation Agents, Workflow Optimization Agents. The budget conversation will allocate not just heads but agent instances, not just salaries but compute capacity.

The person leading that second org chart doesn't exist today in most enterprises. But enterprises are hiring for the role now, under different names at different companies. Some call it Head of Automation. Some call it Chief AI Officer. Some have not yet named the role because they don't yet know what to call someone whose job is to manage a workforce that never sleeps and never leaves.

The enterprises that move first on this will have a structural advantage. They won't just deploy agents faster. They'll govern them better. They'll onboard them with purpose, audit them with rigor, and manage them with the same organizational discipline they bring to human talent.

That's the real lesson of OpenAI Frontier. It's not that agents are becoming employees. It's that enterprises are starting to treat them as such. And once that framing takes hold, every enterprise function, from finance to legal to HR, will need to answer a question it has never faced before: what does our department look like when half the team isn't human?

When your agent headcount exceeds your human headcount, does HR report to the CEO or to the Chief Agent Officer?